Introduction

This content is lecture material for the “AI Regulations and Trustworthiness” course at the Graduate School of Convergence Security (Department of Industrial Security), Chung-Ang University.

As artificial intelligence (AI) is adopted across industries and everyday life, it is no longer enough for AI models to work “most of the time.” AI regulation is essential for ensuring that AI operates reliably and fairly, and for building an environment in which AI is used responsibly in human society rather than merely tuned for better performance.

This article surveys the current state of AI-related regulation, both internationally and in South Korea, and introduces the concept of AI trustworthiness.

Current State of International and Domestic AI Regulations

With the European Union (EU) adopting the Artificial Intelligence Act (EU AI Act), regulations surrounding AI are now being formally established. Given the rapid pace of AI development, these regulations will keep evolving toward a safer and fairer future. Discussions on AI regulation, however, were under way well before the EU AI Act. This section reviews that trajectory.

Principled Artificial Intelligence (2020)

Source: https://cyber.harvard.edu/publication/2020/principled-ai

The Berkman Klein Center at Harvard University is a research institution that aims to explore and understand cyberspace, studying its development, dynamics, norms, and standards, and addressing challenges such as evaluating the need for laws and sanctions.

The Berkman Klein Center noted that despite the widespread proliferation of “AI principles,” there has been little academic interest in understanding these efforts individually or contextualizing them within the expanding landscape of principles with discernible trends.

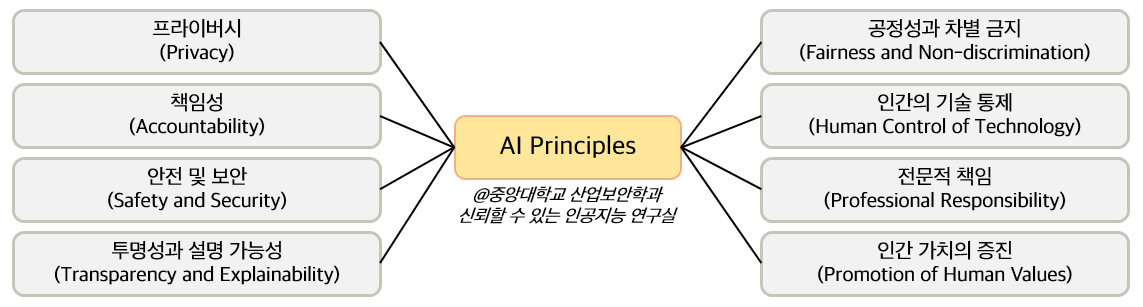

To extract and classify the characteristics of AI principles, the report compared the contents of 36 major AI principles documents side by side and derived 8 key themes common to them.

A brief description of the 8 key themes is as follows.

- Privacy: This theme addresses the principle that AI systems must respect individual privacy. It encompasses (1) the data used in development and (2) the agency of people affected by decisions made with that data. The privacy principle was included in 97% of the analyzed documents.

- Accountability: This theme addresses the importance of mechanisms for appropriately distributing the impacts of AI systems and providing adequate remedies. The accountability principle was included in 97% of the documents.

- Safety and Security: This addresses the requirement that AI systems must operate safely as intended and be secure against unauthorized access. The safety and security principle was included in 81% of the documents.

- Transparency and Explainability: This theme addresses the principle that AI systems should be designed and implemented to be auditable, that the operations of the system should be translated into understandable outputs, and that information about where, when, and how systems are used should be provided. The transparency and explainability principle appeared in 94% of the documents.

- Fairness and Non-discrimination: In a context where AI bias is already having a worldwide impact, the fairness and non-discrimination principle states that AI systems should be designed and used to maximize fairness and promote inclusivity. This principle was found in 100% of the documents.

- Human Control of Technology: This addresses the need for important decisions to still undergo human review. This principle was included in 69% of the documents.

- Professional Responsibility: This theme includes the principle that those involved in the development and deployment of AI systems play a critical role in the system’s impact, and that appropriate stakeholders should be consulted and long-term impacts planned for. The professional responsibility principle was included in 78% of the documents.

- Promotion of Human Values: This addresses the principle that the purposes AI serves and the manner in which it is implemented should reflect our core values and overall promote human well-being. The promotion of human values principle appeared in 69% of the documents.

The Berkman Klein Center grouped the 47 principles it extracted under these themes and identified closely connected principles within each document. The work is significant because it analyzes AI governance principles broadly and distills the key principles they share.

Rome Call for AI Ethics (2020)

Source: https://www.romecall.org/

In 2020, the Roman Curia drafted the “Rome Call for AI Ethics,” a document promoting an ethical approach to Artificial Intelligence (AI). Its core idea is that international organizations, governments, institutions, and technology companies share joint responsibility for creating a future in which digital innovation and technological advancement keep human beings at the center.

This document was signed by five individuals including Archbishop Vincenzo Paglia (President of the Pontifical Academy for Life) and Brad Smith (President of Microsoft). It calls for AI to be developed in accordance with principles that serve the entire “human family” (a concept mentioned in the preamble of the Universal Declaration of Human Rights), respecting the dignity of every individual and the natural environment.

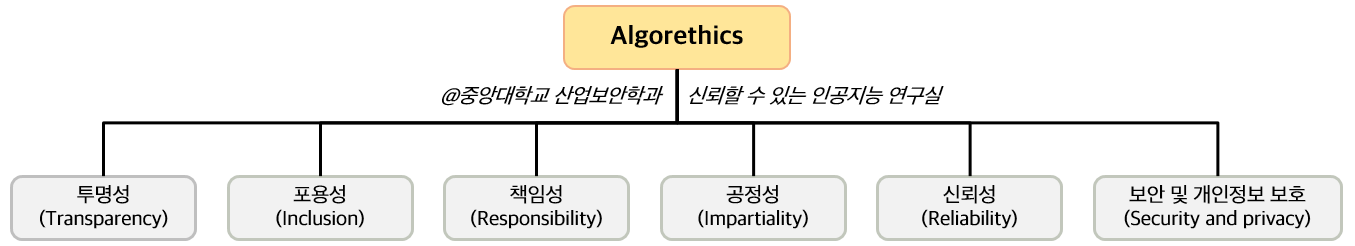

The Call proposes six principles of “algorethics,” built on three goals (ethics, education, rights).

- Transparency: AI systems must be explainable.

- Inclusion: Considering the needs of all human beings, everyone should benefit and each individual should be provided with the best conditions to express themselves and develop.

- Responsibility: Those who design and deploy AI must proceed with accountability and transparency.

- Impartiality: AI systems must maintain fairness without bias and protect human dignity.

- Reliability: AI systems must operate in a trustworthy manner.

- Security and Privacy: AI systems must operate safely and respect users’ personal information.

EU Artificial Intelligence Act (2021)

This is the world’s first comprehensive AI regulation law published by the European Union (EU).

The bill was first proposed on April 21, 2021, passed by the European Parliament in plenary on March 13, 2024, and entered into force on August 1, 2024. The first measures (the prohibitions) apply from February 2025, with most provisions scheduled to apply from August 2026.

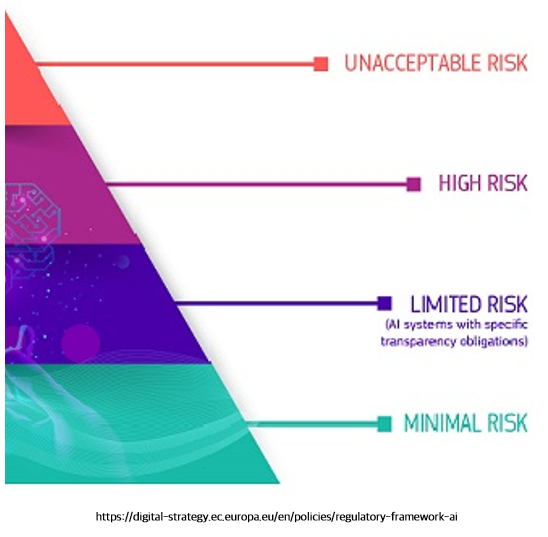

A key feature is that AI systems are regulated according to their risk level, which is divided into four tiers.

Systems in the top two tiers are either (1) prohibited or (2) required to comply with most of the Act’s regulatory provisions.

At the top of the pyramid are Prohibited AI systems. The following 8 types of systems, including social scoring systems and manipulative AI, are banned.

- Distortion of decision-making

- Exploitation of vulnerabilities of specific groups

- Social scoring

- Crime prediction using profiling

- Facial recognition databases

- Emotion inference in workplaces/educational institutions

- Biometric categorization

- Real-time remote biometric identification

Note: Exceptions exist for each category

The second tier of the pyramid is High-risk AI systems, and most regulatory provisions apply to these systems. Two broad categories of products and components are classified as high-risk systems.

- Products and components listed in Annex I of the EU legislation (industrial machinery, agricultural machinery, lifting equipment, toys, radios, wireless devices, goggles, masks, etc.) that require third-party conformity assessment

- AI systems used in the areas listed in Annex III of the EU legislation: biometrics, critical infrastructure, education and vocational training, worker management and access to self-employment, access to and enjoyment of essential private and public services, law enforcement, migration, asylum and border control management, administration of justice and democratic processes

Note: Exceptions exist for each category
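The tiering logic described above can be sketched as a first-pass triage. This is only an illustration: the string labels, the `triage` function, and the `annex_i_third_party` flag are hypothetical names introduced here, the two lower tiers are not distinguished, and the exceptions noted for each category are ignored, so the result is no substitute for legal analysis.

```python
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited"  # top of the pyramid
    HIGH = "high-risk"         # second tier
    LOWER = "lower"            # the two remaining tiers, not distinguished here


# Hypothetical labels for the 8 prohibited practices listed above.
PROHIBITED_PRACTICES = frozenset({
    "decision_distortion",
    "vulnerability_exploitation",
    "social_scoring",
    "profiling_crime_prediction",
    "facial_recognition_database",
    "workplace_education_emotion_inference",
    "biometric_categorization",
    "realtime_remote_biometric_id",
})

# Hypothetical labels for the Annex III areas listed above.
ANNEX_III_AREAS = frozenset({
    "biometrics",
    "critical_infrastructure",
    "education_vocational_training",
    "worker_management",
    "essential_services",
    "law_enforcement",
    "migration_asylum_border_control",
    "justice_democratic_processes",
})


def triage(practices, areas, annex_i_third_party=False):
    """Return a first-pass tier; statutory exceptions are deliberately ignored."""
    # Any prohibited practice outranks every other consideration.
    if PROHIBITED_PRACTICES & set(practices):
        return RiskTier.PROHIBITED
    # High-risk: Annex I product needing third-party conformity assessment,
    # or use in at least one Annex III area.
    if annex_i_third_party or ANNEX_III_AREAS & set(areas):
        return RiskTier.HIGH
    return RiskTier.LOWER
```

For instance, `triage(set(), {"law_enforcement"})` lands in the high-risk tier, while a system matching any prohibited practice is flagged as prohibited regardless of the area in which it is used.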

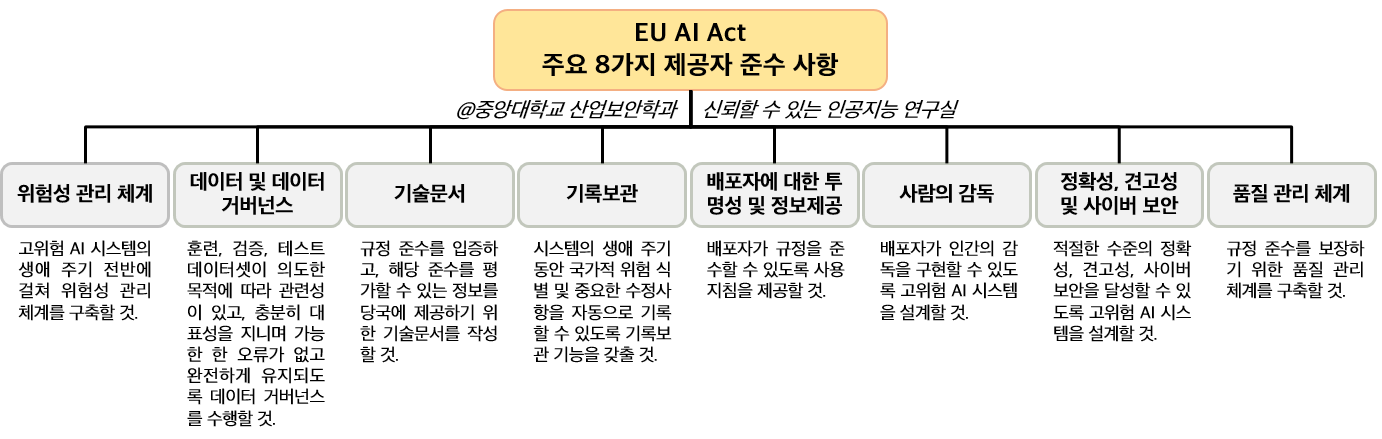

Providers of high-risk systems and the deployers who use them are subject to numerous obligations under the Act. These obligations apply to anyone who places a high-risk AI system on the EU market or puts it into service there, whether they are located within the EU or in a third country. Providers in particular face the most extensive compliance requirements, comprising 8 key obligations.

Other stakeholders (authorized representatives, importers, distributors, etc.) also have obligations and compliance requirements. Stakeholders related to General Purpose AI (GPAI), in particular, are governed by separate rules and are overseen by the EU AI Office.

Failure to fulfill these obligations is punishable by the following fines. Each fine is capped at a fixed amount or a percentage of global annual turnover; for companies other than SMEs, the higher of the two applies.

- Prohibited AI practices: Up to 35 million euros (approximately 52.5 billion KRW) or 7% of global annual turnover.

- Other regulatory violations: Up to 15 million euros (approximately 22.5 billion KRW) or 3% of global annual turnover, for violations by providers, importers, deployers, and others.

- Incorrect information: Up to 7.5 million euros (approximately 11.2 billion KRW) or 1% of global annual turnover, for providing incorrect information.
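The fine ceilings above can be worked through with a short calculation. The sketch below assumes that the higher of the fixed amount and the turnover percentage is the upper limit, as the Act provides for companies other than SMEs; the names `FineTier`, `FINE_TIERS`, and `max_fine_eur` are hypothetical and introduced only for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FineTier:
    fixed_cap_eur: int     # fixed ceiling in euros
    turnover_percent: int  # ceiling as a percentage of global annual turnover


# Figures taken from the three fine categories listed above.
FINE_TIERS = {
    "prohibited_practice": FineTier(35_000_000, 7),
    "other_violation": FineTier(15_000_000, 3),
    "incorrect_information": FineTier(7_500_000, 1),
}


def max_fine_eur(violation: str, global_turnover_eur: float) -> float:
    """Upper limit of the fine, assuming the higher of the two caps applies."""
    tier = FINE_TIERS[violation]
    turnover_based = global_turnover_eur * tier.turnover_percent / 100
    return max(tier.fixed_cap_eur, turnover_based)


if __name__ == "__main__":
    # A company with EUR 1 billion turnover: 7% exceeds the fixed 35 million cap.
    print(max_fine_eur("prohibited_practice", 1_000_000_000))
    # A company with EUR 100 million turnover: the fixed 35 million cap dominates.
    print(max_fine_eur("prohibited_practice", 100_000_000))
```

The turnover-based ceiling only overtakes the fixed amount for large companies: for prohibited practices the crossover sits at 500 million euros of turnover (35 million divided by 7%).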

The EU AI Act also includes provisions for regulatory sandboxes, the establishment of an AI Office, the creation of an EU database, and the construction of monitoring systems.

US AI Regulations (USA AI Acts)

The United States has been enacting AI-related legislation since the 2020 ‘National Artificial Intelligence Initiative Act.’ This act aimed to advance AI research and development and to develop trustworthy AI systems, establishing advisory committees and founding and supporting National Artificial Intelligence Research Institutes.

Source: https://www.congress.gov/bill/116th-congress/house-bill/6216

Subsequently, the 2022 ‘Artificial Intelligence Training for the Acquisition Workforce Act’ and the 2022 ‘Advancing American AI Act’ mandated AI training programs and use case analysis within the government. Most recently, the 2023 Executive Order on the ‘Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence’ made it clear that the U.S. administration treats safe and responsible AI development and management as a top priority.

Source: https://www.whitehouse.gov/briefing-room/presidential-actions/2023/10/30/executive-order-on-the-safe-secure-and-trustworthy-development-and-use-of-artificial-intelligence/

The executive order sets out a total of 8 principles, including safety and security, promoting responsible innovation, consumer protection, and protecting privacy and civil liberties.

Domestic AI Regulations (South Korea)

The South Korean government is also engaging in discussions and reviews on AI-related regulations in line with these global regulatory trends. Recently, the Future Legislation Innovation Planning Group published “Trends in Domestic and International AI-related Legislation,” which summarized the situation as follows.

Source: https://www.moleg.go.kr/boardDownload.es?bid=legnlpst&list_key=3813&seq=1

- A total of 13 AI-related bills were introduced in the 21st National Assembly, of which 9 were proposed for the purpose of promoting and regulating AI itself.

- Among current laws that include the term “artificial intelligence,” 23 laws substantively regulate AI (e.g., the Personal Information Protection Act and the Public Official Election Act).

In addition, the government has been actively reviewing domestic AI regulations through the ‘AI Law, System, and Regulatory Reform Roadmap (Dec. 2020),’ the ‘Financial Sector AI Guidelines (Jul. 2021),’ and most recently the ‘Plan for Establishing a New Digital Order (May 2024).’

The government has also issued the following guidelines in collaboration with related agencies.

- Guide for Developing Trustworthy AI (Feb. 2023) - Telecommunications Technology Association (research outcome of the Ministry of Science and ICT’s “Enhancement of AI Trustworthiness Verification System” project)

- Security Guidelines for Using Generative AI such as ChatGPT (Jun. 2023) - National Intelligence Service and National Security Research Institute

Conclusion

As AI advances rapidly, the need for regulation is more pressing than ever. Regulation is essential if AI is to become a trustworthy technology in human society rather than merely a better-performing one. Meeting these regulations in turn requires AI trustworthiness: AI systems must reflect regulatory requirements and be able to correct and improve themselves accordingly.