Paper: Evaluating practical adversarial robustness of fault diagnosis systems via spectrogram-aware ensemble method

Authors: Hoki Kim, Sangho Lee, Jaewook Lee, Woojin Lee, Youngdoo Son

Venue: Engineering Applications of Artificial Intelligence, 130 (2024)

Introduction

As modern industry has grown more complex, production efficiency has improved and the ability to meet diverse customer demands has grown. However, problems such as bearing faults have also increased. This makes monitoring systems that identify malfunctions and failures increasingly important.

Data-driven fault diagnosis models are trained on collected data and used to detect faults before they lead to failures during system operation. However, the machine learning models used in this context are vulnerable to subtle noise, which makes them susceptible to adversarial attacks. Understanding how robust fault diagnosis models are against such attacks is therefore critically important.

Previous research has focused on adversarial attacks against white-box models. In practice, however, deployed models are black boxes whose details are not disclosed for security reasons. In other words, the experimental setting of prior work does not match the actual industrial environment. Moreover, existing adversarial attacks applied to black-box models tend to overestimate robustness. To address these problems, this paper incorporates domain information, in the form of spectrograms, into the generation of adversarial examples in a black-box setting.

Background

About Bearings

Bearings consist of an inner race, outer race, balls, and cage, and serve as fundamental components in industrial engineering systems.

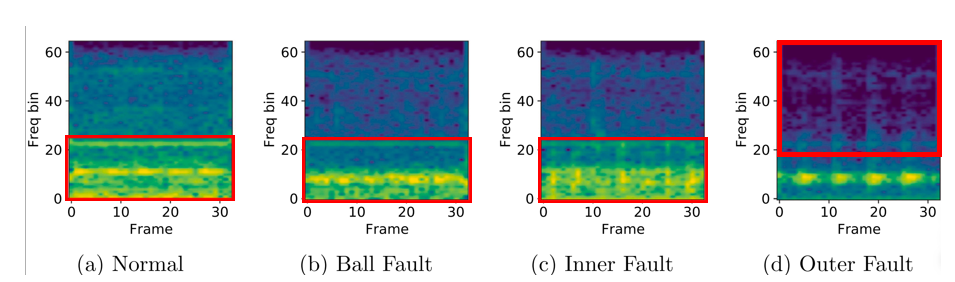

Each component can develop its own type of fault. The CWRU (Case Western Reserve University) bearing dataset covers inner race faults, outer race faults, ball faults and normal conditions, and it is used as training data for bearing fault diagnosis systems.

The bearing fault diagnosis model takes vibration signals from rotating machinery as input and predicts the machine condition as output. It is trained with the cross-entropy loss:

\[\begin{equation} \mathcal{L}_{CE}(f(\mathbf{x}), \mathbf{y}) = - \sum_{i=1}^{C} y_i \log(f(\mathbf{x})_i), \quad \text{for } C \text{ classes} \end{equation}\]

Training seeks the model f that minimizes this loss:

\[\begin{equation} \arg\min_{f} \mathcal{L}_{CE}(f(\mathbf{x}), \mathbf{y}) \end{equation}\]

The vibration signal x is a time-domain waveform. Prior research has shown that the frequency domain is more informative for diagnosing bearing faults. In particular, a bearing fault produces periodic impulse responses in specific frequency bands, and this frequency information enables accurate fault analysis. The Fourier transform converts a time-domain signal into the frequency domain. The Short-Time Fourier Transform (STFT) extends it by transforming short windows of the signal, which preserves temporal information.
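The following minimal Python sketch makes the frequency-domain argument concrete; it is an illustration, not taken from the paper. It builds a synthetic impulse train to stand in for the periodic impacts of a localized fault, then computes the spectrum with a naive DFT. The sampling rate `FS` and fault frequency `FAULT_HZ` are hypothetical values. Real bearing signals also contain structural resonances and noise.

```python
import cmath
import math


def dft_magnitude(signal):
    """Naive O(N^2) DFT; returns |X[k]| for k = 0..N//2 (one-sided spectrum)."""
    n = len(signal)
    return [
        abs(sum(signal[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)))
        for k in range(n // 2 + 1)
    ]


# Hypothetical setup: 1 kHz sampling, 1 s of data, and a fault that
# produces one impulse every 1/50 s (fault frequency 50 Hz).
FS = 1000
FAULT_HZ = 50
period = FS // FAULT_HZ                      # 20 samples between impulses
signal = [1.0 if t % period == 0 else 0.0 for t in range(FS)]

mag = dft_magnitude(signal)
bin_hz = FS / len(signal)                    # frequency spacing of DFT bins (1 Hz here)
# Energy concentrates at the fault frequency and its harmonics.
peaks = [k * bin_hz for k in range(1, len(mag)) if mag[k] > 1.0]
print(peaks[:4])  # → [50.0, 100.0, 150.0, 200.0]
```

In the time domain the impulses are spread over the whole record. In the spectrum they collapse onto a few bins at the fault frequency and its harmonics.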

Spectrogram

A conventional FFT captures the overall frequency content of a signal, but it cannot show how that content changes over time. A spectrogram, computed from the magnitude of the STFT, shows how the frequency content evolves over time, which allows the characteristics of bearing faults to be identified in detail. Using STFT-based spectrograms as input to CNN (Convolutional Neural Network) models reflects these characteristics well and improves the accuracy of bearing fault diagnosis.
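A spectrogram can be sketched in a few lines of plain Python. The helper below is an illustration under stated assumptions, not the paper's implementation. It uses a periodic Hann window and a hop of half the window, applies no padding, and returns the STFT magnitude. The test signal switches frequency halfway through, a change that a single FFT over the whole signal could not localize in time.

```python
import cmath
import math


def hann(w):
    # Periodic Hann window of length w.
    return [0.5 - 0.5 * math.cos(2 * math.pi * i / w) for i in range(w)]


def spectrogram(x, w, hop=None):
    """Magnitude spectrogram |STFT(x)| as a list of frames, each with w//2 + 1 bins.

    Sketch only: frames that do not fit entirely inside x are dropped (no padding).
    """
    hop = hop or w // 2
    win = hann(w)
    frames = []
    for start in range(0, len(x) - w + 1, hop):
        seg = [x[start + i] * win[i] for i in range(w)]
        frames.append([
            abs(sum(seg[t] * cmath.exp(-2j * math.pi * k * t / w) for t in range(w)))
            for k in range(w // 2 + 1)
        ])
    return frames


# A signal whose frequency doubles halfway through (1/8 -> 1/4 cycles per sample).
x = [math.sin(2 * math.pi * t / 8) if t < 128 else math.sin(2 * math.pi * t / 4)
     for t in range(256)]
spec = spectrogram(x, w=32)                  # 15 frames x 17 bins
peak_bin = [max(range(len(f)), key=f.__getitem__) for f in spec]
print(peak_bin[0], peak_bin[-1])             # bin 4 early, bin 8 late
```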

Adversarial Attacks

Adversarial attacks generate small, malicious perturbations that deceive machine learning models. Given a model f, an attack solves the following maximization problem:

\[\begin{equation} \max_{\|\delta\| \leq \epsilon} \mathcal{L}_{CE}(f(x + \delta), y) \end{equation}\]

In other words, the attack looks for a perturbation δ, bounded in norm by ε, that makes the model change its prediction away from the correct label y of the original sample x. Well-known adversarial attacks include FGSM (Fast Gradient Sign Method) and PGD (Projected Gradient Descent).

\[\begin{equation} \delta = \epsilon \cdot \text{sign}(\nabla_{x} \mathcal{L}_{CE}(f(x), y)) \end{equation}\]

FGSM, shown above, generates adversarial noise quickly by taking a single step of size ε in the sign direction of the loss gradient with respect to the input. PGD is a multi-step version of FGSM. It produces stronger adversarial examples by repeating the gradient update several times instead of just once:

\[\begin{equation} \delta^{(t+1)} = \Pi_{\|\delta\| \leq \epsilon} \left[ \delta^{(t)} + \alpha \cdot \text{sign}\left(\nabla_{\delta} \mathcal{L}_{CE}(f(x + \delta^{(t)}), y)\right) \right] \end{equation}\]

At each step t, the perturbation moves by the step size α in the sign direction of the loss gradient. The projection Π then maps it back into the ε-ball, so its norm never exceeds ε.
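The sketch below implements FGSM and ℓ∞ PGD in plain Python against a toy linear softmax classifier. For this model, the input gradient of the cross-entropy has the closed form Wᵀ(p − onehot(y)). The model, its weights and all helper names are hypothetical. A real fault diagnosis network would obtain the gradient through automatic differentiation instead.

```python
import math


def softmax(z):
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]


def predict(W, b, x):
    # Toy linear classifier f(x) = softmax(Wx + b); W has one row per class.
    return softmax([sum(wj * xj for wj, xj in zip(row, x)) + bc for row, bc in zip(W, b)])


def ce_loss(W, b, x, y):
    # Cross-entropy L_CE(f(x), y) for an integer class label y.
    return -math.log(predict(W, b, x)[y])


def input_grad(W, b, x, y):
    # Closed-form dL_CE/dx = W^T (p - onehot(y)) for the linear softmax model.
    p = predict(W, b, x)
    err = [pc - (1.0 if c == y else 0.0) for c, pc in enumerate(p)]
    return [sum(err[c] * W[c][j] for c in range(len(W))) for j in range(len(x))]


def sign(v):
    return (v > 0) - (v < 0)


def perturb(x, delta):
    return [xj + dj for xj, dj in zip(x, delta)]


def fgsm(W, b, x, y, eps):
    # Single step: delta = eps * sign(grad_x L_CE(f(x), y)).
    return [eps * sign(g) for g in input_grad(W, b, x, y)]


def pgd(W, b, x, y, eps, alpha, steps):
    # Repeated signed-gradient ascent, projected onto the L-inf ball of radius eps.
    delta = [0.0] * len(x)
    for _ in range(steps):
        g = input_grad(W, b, perturb(x, delta), y)
        delta = [max(-eps, min(eps, d + alpha * sign(gj))) for d, gj in zip(delta, g)]
    return delta


W = [[1.0, -1.0, 0.5], [-0.5, 1.0, 1.0], [0.0, 0.5, -1.0]]   # hypothetical weights
b = [0.0, 0.0, 0.0]
x, y, eps = [1.0, 0.2, 0.1], 0, 0.3
d_fgsm = fgsm(W, b, x, y, eps)
d_pgd = pgd(W, b, x, y, eps, alpha=0.05, steps=20)
print(ce_loss(W, b, x, y), ce_loss(W, b, perturb(x, d_fgsm), y),
      ce_loss(W, b, perturb(x, d_pgd), y))
```

Even for a linear model, the gradient sign can change between PGD steps because the predicted probabilities p change. PGD can therefore end at a different corner of the ε-ball than FGSM.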

Adversarial Attacks and Black-Box Models

Simple algorithms such as FGSM and PGD can effectively degrade the performance of machine learning models across various domains. Prior studies have confirmed that such attacks are also effective against bearing fault diagnosis systems.

A white-box setting assumes that the attacker has complete information about the trained model, including its architecture, weights and gradients. FGSM and PGD depend on this assumption: they compute the gradient of the loss with respect to the input, so they require access to the internals of the model f.

In practice, however, trained models are usually not publicly available. Industrial fault diagnosis models in particular are not provided in a directly accessible form; they can only be reached by supplying inputs through IoT devices. A realistic robustness evaluation must therefore perform attacks in a black-box setting.

In a black-box setting, adversarial attacks are carried out as transfer attacks:

\[\begin{equation} f(x') \neq y, \quad x' = x + \arg\max_{\|\delta\| \leq \epsilon} \mathcal{L}_{CE}(g(x + \delta), y) \end{equation}\]

Here, g is the source model: either a publicly available model or one trained by the attacker. For example, to attack a specific company's industrial fault diagnosis model, a publicly available neural network from the same domain can serve as the source model.

Adversarial noise is optimized on the source model to maximize the source model’s loss, generating adversarial examples that are then fed to the target model f.

In a white-box setting, robustness can be measured directly by attacking the model itself. This is not possible in a black-box setting, so practical robustness is approximated as 1 − P, where P is the attack success rate of the transferred adversarial examples. However, simply applying existing FGSM and PGD attacks in a black-box setting overestimates practical robustness. This makes it difficult to judge accurately how vulnerable the target model is in real-world settings.
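The transfer setting can be illustrated with two toy logistic classifiers. The attacker runs PGD with full gradient access on a source model g and only evaluates the resulting examples on a separate target model f. All weights, the data generator and the function names are hypothetical. The sketch computes the success rate P only over samples that the target classifies correctly; this is one common convention and an assumption here.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(w, x):
    # Toy binary classifier: class 1 iff w . x >= 0.
    return int(sigmoid(sum(wi * xi for wi, xi in zip(w, x))) >= 0.5)


def pgd_on_source(w_src, x, y, eps, alpha, steps):
    # White-box PGD on the source model g only; the target f is never queried here.
    delta = [0.0] * len(x)
    for _ in range(steps):
        p = sigmoid(sum(wi * (xi + di) for wi, xi, di in zip(w_src, x, delta)))
        grad = [(p - y) * wi for wi in w_src]            # d BCE / dx for a logistic model
        delta = [max(-eps, min(eps, d + alpha * ((g > 0) - (g < 0))))
                 for d, g in zip(delta, grad)]
    return [xi + di for xi, di in zip(x, delta)]


def transfer_success_rate(w_src, w_tgt, data, eps, alpha=0.01, steps=10):
    # P = share of correctly classified samples whose target prediction flips.
    attacked = flipped = 0
    for x, y in data:
        if predict(w_tgt, x) != y:
            continue
        attacked += 1
        x_adv = pgd_on_source(w_src, x, y, eps, alpha, steps)
        flipped += int(predict(w_tgt, x_adv) != y)
    return flipped / attacked if attacked else 0.0


rng = random.Random(0)
w_true = [1.0, -2.0, 0.5, 1.5]           # hypothetical labelling rule
data = []
for _ in range(200):
    x = [rng.uniform(-1, 1) for _ in range(4)]
    data.append((x, predict(w_true, x)))

w_src = [1.1, -1.8, 0.4, 1.6]            # attacker's surrogate g
w_tgt = [0.9, -2.1, 0.7, 1.3]            # deployed target f, unknown to the attacker
P = transfer_success_rate(w_src, w_tgt, data, eps=0.1)
print(f"P = {P:.2f}, estimated practical robustness 1 - P = {1 - P:.2f}")
```

If the examples crafted on g transfer poorly, P stays low and 1 − P looks high. This is the overestimation that the paper attributes to plain FGSM and PGD.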

Domain-Specific Information for Adversarial Attacks

Prior research has shown that adversarial attacks that incorporate domain-specific information are effective in black-box settings. In the image domain, for example, applying common visual transformations T while optimizing the adversarial example improves attack performance:

\[\begin{equation} \max_{\|\delta\| \leq \epsilon} \mathcal{L}_{CE}(g(T(x + \delta)), y) \end{equation}\]

Similarly, leveraging acoustic domain knowledge by adding continuous noise during the attack has been shown to improve attack performance. Many studies have investigated adversarial attacks in black-box settings. However, according to the authors, no prior work has evaluated the practical robustness of industrial fault diagnosis systems in a black-box setting. This paper therefore proposes a new attack that incorporates spectrogram information into the optimization of adversarial examples.
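The transformation-based objective max L(g(T(x + δ)), y) can be illustrated with a small sketch, under several assumptions. A random circular time shift stands in for T; the cited image-domain work uses visual transformations, not this one. Before each signed PGD step, the input gradient is averaged over a few sampled shifts, which is one common way to optimize through a random T. The logistic source model, its weights and all function names are hypothetical.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def shift(z, s):
    # Hypothetical transformation T_s: circular time shift, T_s(z)[i] = z[(i - s) % n].
    n = len(z)
    return [z[(i - s) % n] for i in range(n)]


def grad_through_shift(w, z, y, s):
    # Gradient of BCE(g(T_s(z)), y) w.r.t. z for a toy source model g(u) = sigmoid(w . u).
    n = len(z)
    p = sigmoid(sum(wi * ui for wi, ui in zip(w, shift(z, s))))
    # Since w . T_s(z) = sum_j w[(j + s) % n] * z[j], the weights are re-indexed.
    return [(p - y) * w[(j + s) % n] for j in range(n)]


def transformed_pgd_step(w, x, delta, y, alpha, eps, shifts):
    # Average the gradient over the sampled shifts, then take one projected signed step.
    z = [xi + di for xi, di in zip(x, delta)]
    grads = [grad_through_shift(w, z, y, s) for s in shifts]
    avg = [sum(col) / len(grads) for col in zip(*grads)]
    return [max(-eps, min(eps, d + alpha * ((g > 0) - (g < 0)))) for d, g in zip(delta, avg)]


rng = random.Random(0)
w = [0.5, -1.0, 2.0, 0.0, 1.0, -0.5]     # hypothetical source-model weights
x = [0.1, 0.3, -0.2, 0.4, 0.0, 0.2]
delta = [0.0] * len(x)
for _ in range(10):
    shifts = [rng.randrange(len(x)) for _ in range(4)]   # sample T afresh each step
    delta = transformed_pgd_step(w, x, delta, 1, alpha=0.02, eps=0.1, shifts=shifts)
print(delta)
```

With a single shift of 0, the step reduces to an ordinary signed PGD step on the untransformed input.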

As shown in the figure above, spectral information carries strong domain characteristics in bearing fault diagnosis systems. This allows model robustness to be evaluated more precisely than was previously possible. The paper therefore proposes a regularization term that manipulates spectral information while adversarial examples are generated in a black-box setting:

\[\begin{equation} \mathcal{L}_{SC}(x, x^*, \delta) = 1 - \cos(\text{Spec}(x + \delta), \text{Spec}(x)) + \max\left( 0, \cos(\text{Spec}(x + \delta), \text{Spec}(x^*)) - \gamma \right) \end{equation}\]

This is a cosine embedding loss computed on spectrograms, where Spec(·) denotes the spectrogram and γ is a margin. The adversarial noise is updated by gradient ascent on this loss. The first term therefore pushes the spectrogram of the adversarial example x + δ away from that of the original example x. The hinge term rewards similarity between the spectrograms of x + δ and the reference signal x* once their cosine similarity exceeds γ. The cross-entropy and cosine embedding losses are then combined, with weight β, to update the adversarial noise:

\[\begin{equation} \delta^{(t+1)} = \Pi_{\|\delta\| \leq \epsilon} \left[ \delta^{(t)} + \alpha \cdot \text{sign} \left( \nabla_{\delta} \left( \mathcal{L}_{CE}(g(x + \delta^{(t)}), y) + \beta \mathcal{L}_{SC}(x, x^*, \delta^{(t)}) \right) \right) \right] \end{equation}\]

Spectrogram-Based Ensemble Method

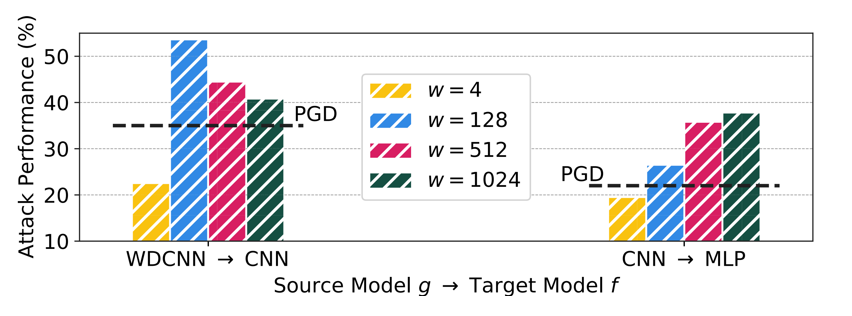

The cosine embedding loss is computed on spectrograms, so the spectrogram settings strongly influence the optimization. This paper therefore also analyzes how the STFT window size affects adversarial noise generation. The window size sets the trade-off between time and frequency resolution. A small window gives high time resolution but coarse frequency resolution, while a large window gives fine frequency resolution at the cost of temporal detail.
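The trade-off can be quantified with simple arithmetic. The frequency resolution of an STFT bin is the sampling rate divided by the window length, and the time span of one window is the inverse of that ratio. The sketch assumes a 12 kHz sampling rate (one of the rates in the CWRU data), a hypothetical 2048-sample input, and a hop of half the window. The paper's actual preprocessing may differ.

```python
FS = 12_000       # assumed sampling rate in Hz
LENGTH = 2048     # hypothetical input length in samples


def stft_tradeoff(w, fs=FS, length=LENGTH):
    # Hop of half the window and no padding are assumptions of this sketch.
    hop = w // 2
    return {
        "window": w,
        "freq_resolution_hz": fs / w,       # spacing between frequency bins
        "window_ms": 1000 * w / fs,         # time span covered by one frame
        "frames": (length - w) // hop + 1,  # number of time steps in the spectrogram
    }


for w in (32, 128, 512, 1024):
    r = stft_tradeoff(w)
    print(f"w={w:5d}  df={r['freq_resolution_hz']:7.2f} Hz  "
          f"span={r['window_ms']:6.2f} ms  frames={r['frames']}")
```

Under these assumptions, a 128-sample window resolves about 94 Hz per bin and yields 31 frames. A 1024-sample window resolves about 12 Hz per bin but leaves only 3 frames.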

The figure above shows the attack success rate for several source-target model combinations across window sizes. When the window is too small, attack performance is actually lower than that of PGD. The best window, however, depends on the model pair. With WDCNN (a deep CNN with wide first-layer kernels) as the source model and a CNN as the target model, a window size of 128 gives the highest attack performance. With a CNN source model and an MLP (Multi-Layer Perceptron) target model, a window size of 1024 is best. Because the optimal window varies with the source-target combination, this paper proposes considering multiple window sizes simultaneously instead of fixing a single one.

\[\begin{align} \mathcal{L}_{SC}(x, x^*, \delta; w) := 1 & -\cos(\text{Spec}(x + \delta; w), \text{Spec}(x; w)) \\ &+ \max \left( 0, \cos(\text{Spec}(x + \delta; w), \text{Spec}(x^*; w)) - \gamma \right) \end{align}\]

Here the spectrogram is parameterized by the window size w, so the loss can be computed separately for each window. For every w, the loss pushes the spectrogram of the adversarial example away from that of the original example, and it rewards similarity to Spec(x*; w). Combining this loss over multiple window sizes gives SAEM (Spectrogram-Aware Ensemble Method). SAEM injects domain knowledge through the cosine embedding loss and combines it with the cross-entropy loss to generate effective adversarial signals that deceive the model.
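The sketch below puts the pieces together. It evaluates L_SC for each window size, averages the per-window losses, and takes update steps of the form δ ← Π[δ + α·sign(∇(L_CE + β·L_SC))] given earlier, with L_SC replaced by its average over windows. Several parts are assumptions rather than the paper's specification:

- Averaging is used as the ensemble rule.
- The source model g is a toy logistic classifier.
- Gradients are estimated with central finite differences instead of autodiff.
- x* is simply a given reference signal.
- β = 1 and γ = 0.5 are arbitrary.
- The windows (8, 16) are kept small so that the naive DFT stays fast; the paper reports windows such as 128 and 1024.

```python
import cmath
import math


def spec(x, w):
    # Flattened magnitude STFT: periodic Hann window, hop w // 2, no padding.
    win = [0.5 - 0.5 * math.cos(2 * math.pi * i / w) for i in range(w)]
    out = []
    for s in range(0, len(x) - w + 1, w // 2):
        seg = [x[s + i] * win[i] for i in range(w)]
        out += [abs(sum(seg[t] * cmath.exp(-2j * math.pi * k * t / w) for t in range(w)))
                for k in range(w // 2 + 1)]
    return out


def cos_sim(a, b):
    dot = sum(p * q for p, q in zip(a, b))
    return dot / (math.sqrt(sum(p * p for p in a)) * math.sqrt(sum(q * q for q in b)) + 1e-12)


def l_sc(x, x_star, delta, w, gamma=0.5):
    # 1 - cos(Spec(x+delta; w), Spec(x; w)) + max(0, cos(Spec(x+delta; w), Spec(x*; w)) - gamma)
    s_adv = spec([a + d for a, d in zip(x, delta)], w)
    return (1 - cos_sim(s_adv, spec(x, w))
            + max(0.0, cos_sim(s_adv, spec(x_star, w)) - gamma))


def ensemble_l_sc(x, x_star, delta, windows, gamma=0.5):
    # Assumed ensemble rule: plain average of the per-window losses.
    return sum(l_sc(x, x_star, delta, w, gamma) for w in windows) / len(windows)


def objective(delta, x, x_star, y, w_src, windows, beta=1.0):
    # L_CE(g(x + delta), y) + beta * ensemble L_SC, with a toy logistic source model g.
    logit = sum(wi * (a + d) for wi, a, d in zip(w_src, x, delta))
    p = 1.0 / (1.0 + math.exp(-logit))
    ce = -math.log(p) if y == 1 else -math.log(1.0 - p)
    return ce + beta * ensemble_l_sc(x, x_star, delta, windows)


def ensemble_step(delta, x, x_star, y, w_src, windows, alpha, eps, h=1e-4):
    # Central finite differences stand in for autodiff (only practical for toy lengths).
    grad = []
    for j in range(len(delta)):
        up, down = list(delta), list(delta)
        up[j] += h
        down[j] -= h
        grad.append((objective(up, x, x_star, y, w_src, windows)
                     - objective(down, x, x_star, y, w_src, windows)) / (2 * h))
    # Signed ascent step, then projection onto the L-inf ball of radius eps.
    return [max(-eps, min(eps, d + alpha * ((g > 0) - (g < 0)))) for d, g in zip(delta, grad)]


n = 32
x = [math.sin(2 * math.pi * t / 8) for t in range(n)]          # hypothetical clean signal
x_star = [math.sin(2 * math.pi * 3 * t / 8) for t in range(n)]  # hypothetical reference x*
w_src = [0.15 if t % 2 == 0 else -0.05 for t in range(n)]      # hypothetical source weights
windows = (8, 16)
delta = [0.0] * n
for _ in range(3):
    delta = ensemble_step(delta, x, x_star, 1, w_src, windows, alpha=0.01, eps=0.05)
print(objective([0.0] * n, x, x_star, 1, w_src, windows),
      objective(delta, x, x_star, 1, w_src, windows))
```

Finite differences cost two objective evaluations per input sample, so this approach only suits toy lengths. A practical implementation would compute the spectrogram with a differentiable STFT and backpropagate through it.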

Experiment

The experiments show the limitations of PGD attacks in black-box settings and demonstrate that SAEM achieves higher attack performance.

Results

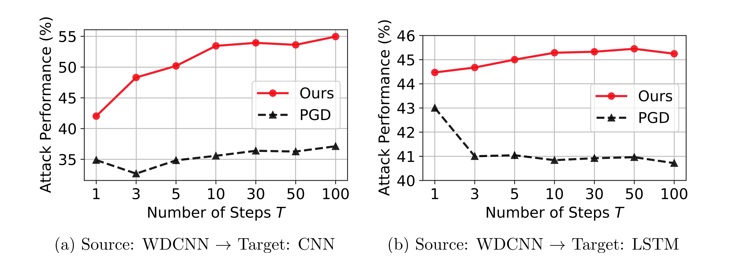

This experiment compares the attack success rates of SAEM and PGD across different source-target model combinations.

As shown in the graph, SAEM’s attack success rate increases with the number of attack steps and is substantially higher than that of PGD.

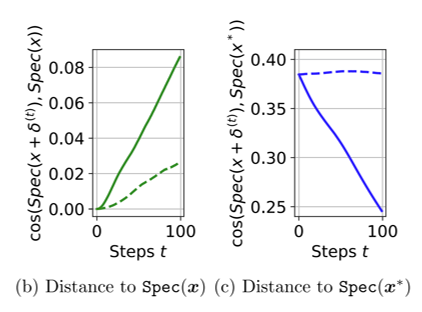

This experiment examines whether PGD and SAEM maximize the spectral distance between the adversarial examples and the original examples.

As shown in the graph, the spectral distance between the adversarial and original examples grows much faster for SAEM, whereas PGD fails to increase it effectively. Panel (c) additionally shows that SAEM successfully minimizes the distance between the target model and the source model on the spectrum manifold.

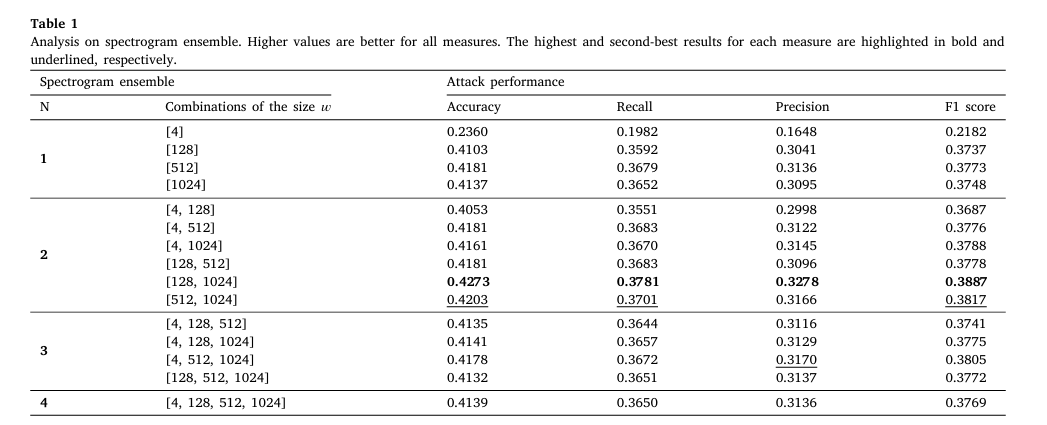

The last experiment compares attack performance across different combinations of window sizes in the spectrogram ensemble.

As shown in the graph, the window combination of [128, 1024] achieves the highest attack success rate, and on average, combining a small window size with a large window size is advantageous for generating malicious adversarial examples.

Conclusion

SAEM achieves higher attack performance than PGD in black-box settings. Future work should explore the practical operating environments of industrial fault diagnosis systems and identify the risk factors that may arise in industrial settings. Research on defenses that exploit spectral information is also needed to improve the robustness of fault diagnosis systems. So are studies in practical settings that account for how accessible the training data is to attackers.