Paper: Position: Current Model Cards Are Insufficient for Downstream Governance of Open-Weight Foundation Models

Authors: Sungwon Chae, Keonwoo Kim, Hoki Kim, Jaeyeon Ju, Sangchul Park

Venue: ICML 2026

Introduction

The diffusion of generative AI and foundation models has moved beyond a purely technical concern and become a governance problem — a question of how society, law, and policy should structure responsibility for systems that anyone can download and reuse. Open-Weight Foundation Models (OWFMs) epitomize this tension: they offer the virtues of openness and reproducibility, yet once their weights are public, anyone can fine-tune, distill, or redistribute them, making downstream accountability inherently difficult.

Since 2019, the dominant response to this challenge has been the Model Card (Mitchell et al. 2019), a standardized document describing intended use, evaluation, limitations, and ethical considerations. Model cards have become the de facto transparency standard. The question this paper raises is therefore pointed:

“How have OWFM developers actually operationalized downstream governance? What are the limitations, and how should the framework be reformed?”

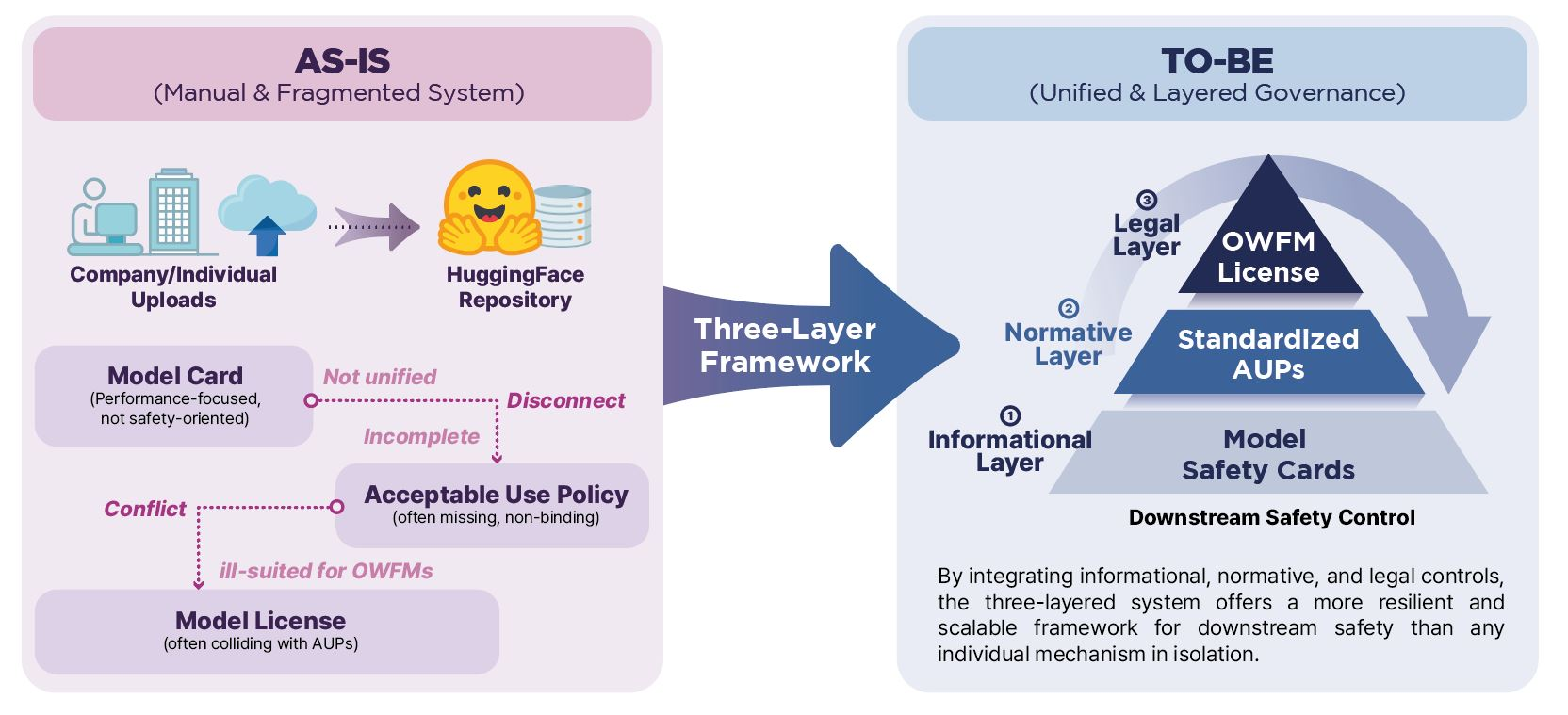

“Position: Current Model Cards Are Insufficient for Downstream Governance of Open-Weight Foundation Models” is a position paper from our lab accepted to ICML 2026. Drawing on an empirical audit, it argues that model cards alone cannot guarantee downstream governance, and it proposes a Three-Layer Governance Framework that integrates Informational, Normative, and Legal artifacts into a single coherent regime. This article walks through the diagnosis and the prescription.

Preliminary

Model Card

A model card documents an AI model’s specification — intended use, training data, evaluation metrics, known limitations, ethical considerations, and environmental impact. Since Mitchell et al. (2019), model cards have been adopted as the standard metadata layer at Hugging Face, Kaggle, and TensorFlow Hub. They are the central instrument of Informational governance — the layer this paper interrogates.

Acceptable Use Policy (AUP)

An Acceptable Use Policy is a normative document that specifies which uses of a model are permitted or forbidden. Meta’s Llama 3 AUP, for instance, prohibits military applications, weapons development, exploitation of minors, discriminatory targeting, and impersonation. AUPs are the central instrument of Normative governance, and because they restrict particular uses, they sit in tension with the broad use grants of OSI-approved licenses.

Model License

Model weights are artifacts in their own right and can be licensed. However, conventional Open-Source Licenses (OSLs) such as Apache 2.0, MIT, or BSD were designed for source code and object code. Model weights are neither code nor data but a third kind of object, learned parameters, whose copyrightability is itself legally ambiguous. Models also produce a vast cascade of derivative artifacts: outputs, fine-tunes, distillations, and quantizations. Traditional OSLs address none of these derivative pathways explicitly.

Main Results

Empirical Audit of 500 Hugging Face Models

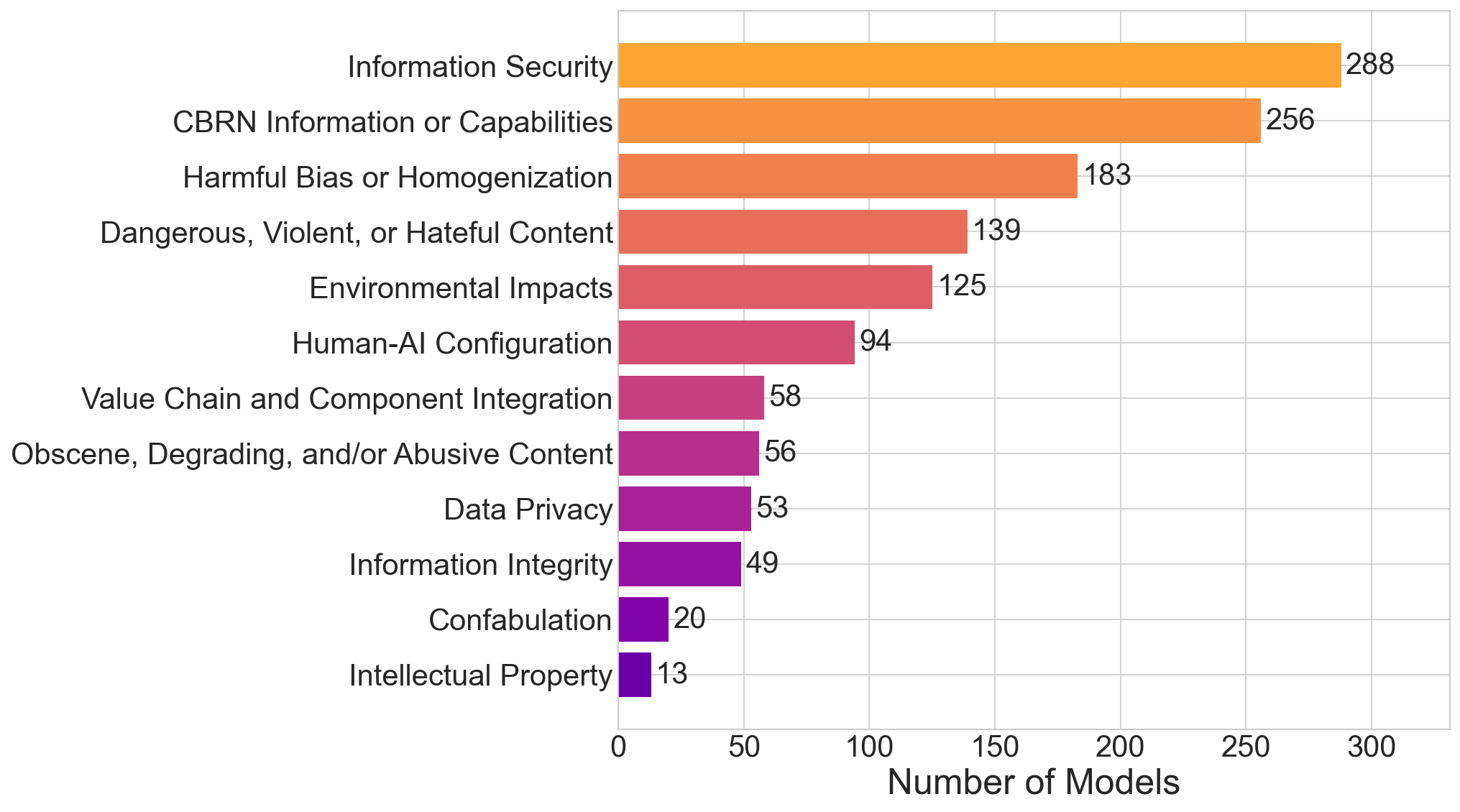

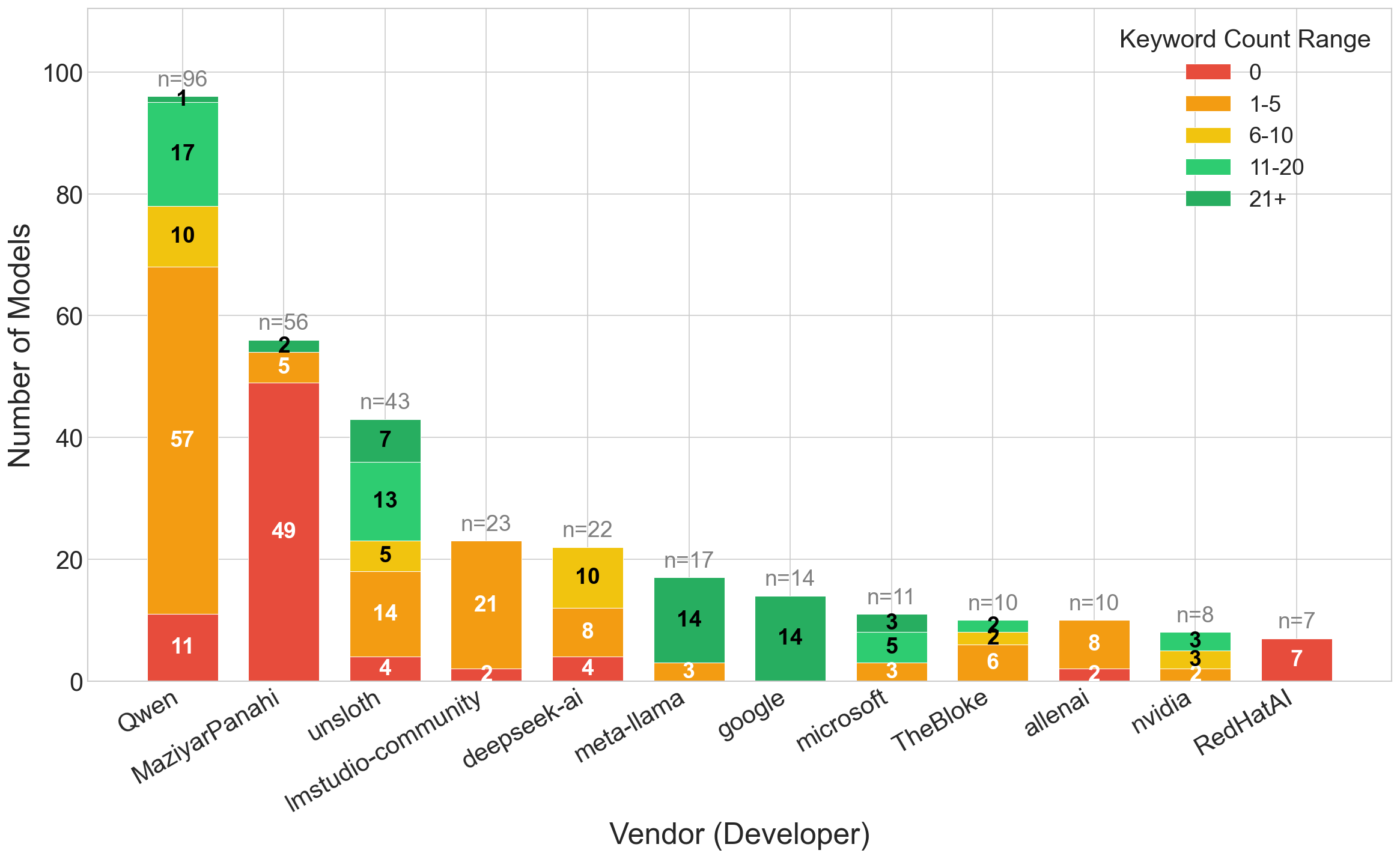

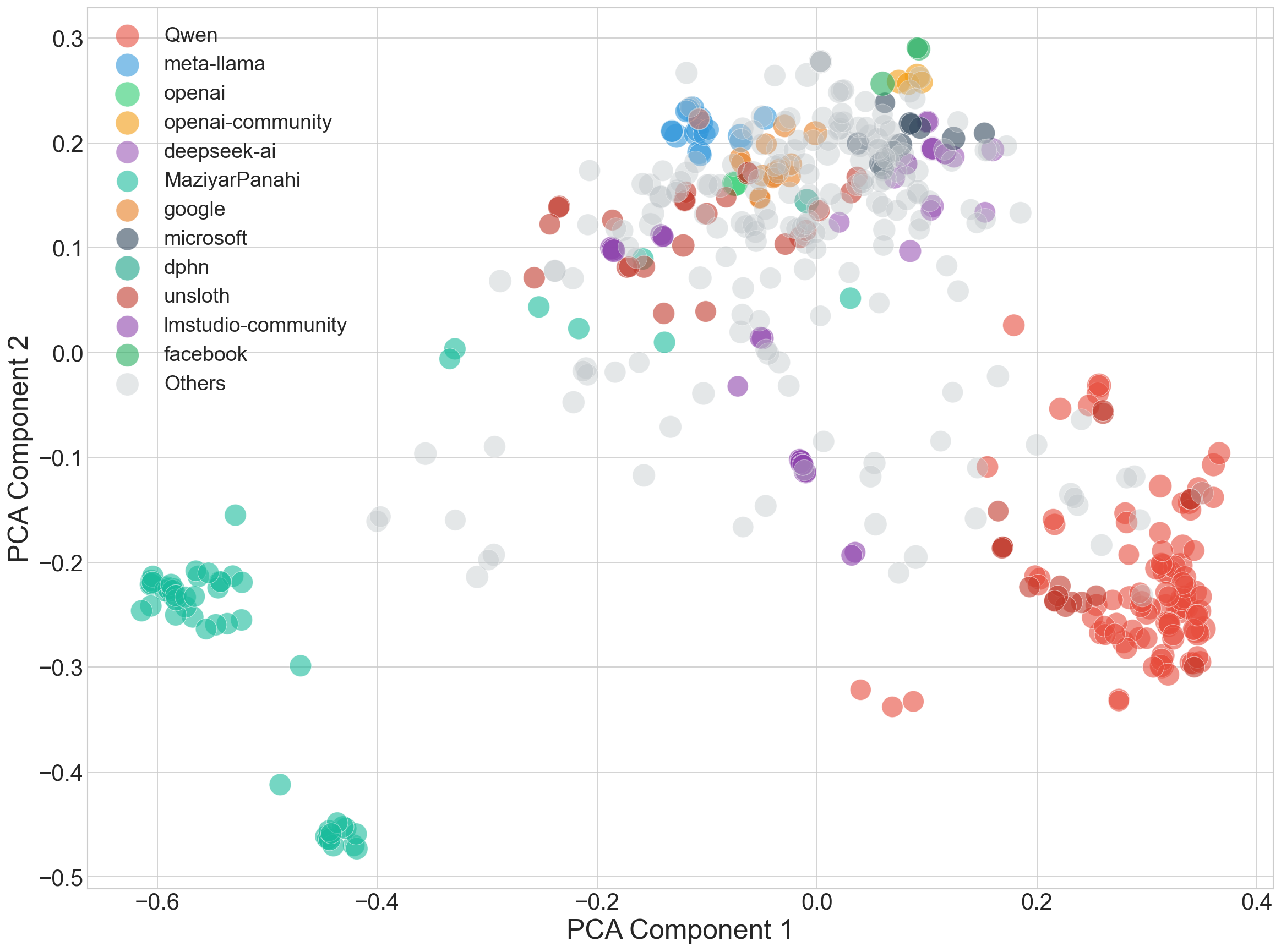

The paper audits the top 500 most-downloaded Hugging Face models as of January 11, 2026, asking which governance artifacts are present. The results are summarized below.

| Governance artifact | Models (of 500) | Share |
|---|---|---|
| Model Card | 464 | 92.8% |
| Safety-specific fields | 366 | 73.2% |
| Acceptable Use Policy (AUP) | 55 | 11.0% |
| Explicit License | 403 | 80.6% |
| All three artifacts | 55 | 11.0% |

The implication is stark. Nearly every popular model ships a model card, but the AUP, the document meant to make use restrictions binding, is present in only 11.0% of cases. The share of models that publish all three artifacts is also 11.0%, which means every model with an AUP also ships a model card and an explicit license. Transparency is widespread; enforceability is rare.
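To make the table's arithmetic explicit, the short sketch below recomputes each share from the raw counts over a denominator of 500. The dictionary only restates the reported figures; `share` and `coverage_gap` are illustrative helpers, not the paper's audit tooling. The gap calculation relies on the observation above that the "All three artifacts" count equals the AUP count, so every AUP model also has a card.

```python
# Counts reported for the top-500 most-downloaded Hugging Face models
# (snapshot of January 11, 2026), restated from the table above.
TOTAL_MODELS = 500
AUDIT_COUNTS = {
    "Model Card": 464,
    "Safety-specific fields": 366,
    "Acceptable Use Policy (AUP)": 55,
    "Explicit License": 403,
    "All three artifacts": 55,
}


def share(count: int, total: int = TOTAL_MODELS) -> float:
    """Percentage of audited models, rounded to one decimal place."""
    return round(100 * count / total, 1)


def coverage_gap(counts: dict) -> int:
    """Models with a model card but no AUP.

    Valid here because the all-three count equals the AUP count,
    so every AUP model is also a model-card model.
    """
    return counts["Model Card"] - counts["Acceptable Use Policy (AUP)"]


if __name__ == "__main__":
    for artifact, count in AUDIT_COUNTS.items():
        print(f"{artifact:<30} {count:>4} {share(count):>5.1f}%")
    print("Card but no AUP:", coverage_gap(AUDIT_COUNTS))
```

Run as a script, it reproduces the Share column and shows that 409 of the 500 models document themselves without stating any enforceable use terms.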

Four Structural Deficiencies

Building on the audit, the paper identifies four structural deficiencies.

1. Pervasive Incompleteness. Safety information is buried inside generic README prose rather than recorded in dedicated, structured fields. High keyword counts reflect volume of writing, not machine-readable governance data, so automated audit, aggregation, and policy mapping all fail.

2. AUPs Largely Absent. Only 11.0% of top-500 models attach an AUP, leaving providers without a binding mechanism to forbid downstream misuse.

3. Licensing Conflicts. 9.8% of models pair a permissive OSL such as Apache 2.0 with AUP-style use restrictions. Because Apache 2.0’s broad grant leaves little room for restricting particular uses, the two documents either contradict each other or carve out a prohibited zone whose legal force is uncertain.

4. Structural Fragmentation. Among models that do include safety fields, only 62.2% are even loosely coordinated with the license text. Informational and legal governance are authored by different teams, in different vocabularies, with different liability assumptions. Downstream users cannot consistently determine which document controls.
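Deficiencies 1 and 3 can be made concrete with a small check over a model card's metadata. The sketch below contrasts a keyword count over README prose with a test for a dedicated safety field, and flags a permissive license paired with use restrictions. The `license` key mirrors the license field in Hugging Face model-card metadata; the `safety` and `use_restrictions` keys, the keyword list, and the license set are assumptions chosen for illustration, not the paper's audit criteria.

```python
import re

# Permissive OSLs for this sketch, written as lowercase SPDX-style identifiers.
PERMISSIVE_LICENSES = {"apache-2.0", "mit", "bsd-3-clause"}

# Hypothetical keyword list; a real audit would need a validated vocabulary.
SAFETY_KEYWORDS = ("bias", "harm", "misuse", "toxicity", "safety")


def keyword_hits(readme_text: str) -> int:
    """Count whole-word safety keywords in README prose (measures volume, not structure)."""
    text = readme_text.lower()
    return sum(len(re.findall(rf"\b{kw}\b", text)) for kw in SAFETY_KEYWORDS)


def has_structured_safety(metadata: dict) -> bool:
    """True only if a dedicated, non-empty safety section exists (hypothetical 'safety' key)."""
    safety = metadata.get("safety")
    return isinstance(safety, dict) and bool(safety)


def license_aup_conflict(metadata: dict) -> bool:
    """Flag a permissive OSL combined with AUP-style use restrictions."""
    license_id = str(metadata.get("license", "")).lower()
    return license_id in PERMISSIVE_LICENSES and bool(metadata.get("use_restrictions"))


def audit(metadata: dict, readme_text: str) -> dict:
    """Run all three checks on one model."""
    return {
        "keyword_hits": keyword_hits(readme_text),
        "structured_safety": has_structured_safety(metadata),
        "license_aup_conflict": license_aup_conflict(metadata),
    }
```

A README that repeats safety vocabulary can score well on the keyword count while still failing the structured check, which is exactly the gap deficiency 1 describes.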

Why Model Cards Alone Fall Short

The paper argues that these findings are not merely a compliance failure but a structural limitation of the model-card instrument itself.

Non-operational Safety Claims. Statements such as “this model has been trained to reduce discriminatory output” are not auditable. Without specifying evaluation datasets, thresholds, and failure modes in operational terms, the claim collapses into marketing prose.
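The difference between a marketing claim and an auditable one can be expressed as a data requirement. In the minimal sketch below, a claim counts as operational only when it names a dataset, a metric, a threshold, and a reported result. The class, its field names, the dataset name, and the numbers are all hypothetical and are not results from the paper; the check also assumes lower metric values are better.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class SafetyClaim:
    """A safety claim plus the operational details needed to check it (illustrative fields)."""
    statement: str
    eval_dataset: Optional[str] = None   # benchmark the claim was measured on
    metric: Optional[str] = None         # what was measured; lower is assumed better here
    threshold: Optional[float] = None    # maximum acceptable value of the metric
    measured: Optional[float] = None     # value the developer reports
    failure_modes: List[str] = field(default_factory=list)

    def is_auditable(self) -> bool:
        """A third party can check the claim only if all four operational fields are given."""
        return all(
            value is not None
            for value in (self.eval_dataset, self.metric, self.threshold, self.measured)
        )

    def meets_threshold(self) -> bool:
        """Compare the reported result with the stated threshold; refuse non-operational claims."""
        if not self.is_auditable():
            raise ValueError("claim lacks a dataset, metric, threshold, or result")
        return self.measured <= self.threshold


if __name__ == "__main__":
    vague = SafetyClaim("This model has been trained to reduce discriminatory output.")
    operational = SafetyClaim(
        statement="Discriminatory completions stay below 5% on the evaluation set.",
        eval_dataset="example-bias-benchmark",  # hypothetical dataset name
        metric="share of completions flagged as discriminatory",
        threshold=0.05,
        measured=0.03,  # hypothetical value
        failure_modes=["higher flag rate on code-switched prompts"],  # hypothetical
    )
    print(vague.is_auditable())           # False
    print(operational.meets_threshold())  # True
```

The vague claim is not wrong so much as uncheckable: `meets_threshold` raises rather than returning a verdict for it.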

Missing Provenance and Influence Disclosure. When the provenance of training and alignment data, and the strength and scope of safety fine-tuning, are not disclosed, downstream users cannot reason about which alignment assumptions are baked into the model they reuse.

Why Current Open-Source Licenses Fall Short

The legal analysis surfaces parallel limitations of OSLs as instruments for OWFM governance.

Copyrightability Ambiguity. Apache 2.0 presupposes a copyrightable software artifact, but jurisdictions in the U.S., EU, and Korea have not converged on whether model weights themselves are copyrightable.

Derivative Work Boundaries. Fine-tunes, LoRA adapters, distillations, and quantized variants are all transformations of the original, but OSLs do not define which of these inherit the original license obligations.
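One way to see why the derivative-boundary question matters is to make inheritance an explicit parameter. The sketch below records each model's parent and the transformation that produced it, walks the lineage back to the original release, and collects ancestor licenses under a chosen inheritance policy. The record format, the model names, and the `inheriting` parameter are hypothetical; the point is that OSLs currently leave the contents of that set undefined.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Set

# Derivative pathways named in the text.
TRANSFORMATIONS = {"fine-tune", "lora-adapter", "distillation", "quantization"}


@dataclass
class ModelRecord:
    name: str
    license: str
    base: Optional[str] = None            # parent model; None for an original release
    transformation: Optional[str] = None  # how this model was derived from its parent

    def __post_init__(self) -> None:
        if (self.base is None) != (self.transformation is None):
            raise ValueError("a derivative needs both a base model and a transformation")
        if self.transformation is not None and self.transformation not in TRANSFORMATIONS:
            raise ValueError(f"unknown transformation {self.transformation!r}")


def lineage(registry: Dict[str, ModelRecord], name: str) -> List[ModelRecord]:
    """Walk from `name` back to its original release, guarding against cycles."""
    chain: List[ModelRecord] = []
    seen: Set[str] = set()
    current: Optional[str] = name
    while current is not None:
        if current in seen:
            raise ValueError(f"cycle in lineage at {current!r}")
        seen.add(current)
        record = registry[current]
        chain.append(record)
        current = record.base
    return chain


def inherited_licenses(
    registry: Dict[str, ModelRecord], name: str, inheriting: Set[str]
) -> List[str]:
    """Ancestor licenses whose obligations reach `name` under a chosen policy.

    `inheriting` lists the transformation types assumed to carry obligations forward.
    """
    chain = lineage(registry, name)
    licenses: List[str] = []
    for child, parent in zip(chain, chain[1:]):
        if child.transformation not in inheriting:
            break  # obligations stop propagating at this step
        licenses.append(parent.license)
    return licenses


registry = {r.name: r for r in [
    ModelRecord("base-model", "apache-2.0"),
    ModelRecord("chat-ft", "apache-2.0", base="base-model", transformation="fine-tune"),
    ModelRecord("chat-ft-int4", "unspecified", base="chat-ft", transformation="quantization"),
]}
```

If both fine-tunes and quantizations inherit, `chat-ft-int4` picks up both ancestors' licenses; drop `"quantization"` from the policy and it inherits nothing, even though it is a compressed copy of its parent.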

Rights over Training Data. OSLs are silent on how rights and infringement liabilities in the training data propagate into the weights and their downstream uses.

Conflict with AUPs. Apache 2.0’s broad grant is fundamentally incompatible with use-based restrictions; bolting an AUP onto Apache 2.0 either weakens the AUP’s enforceability or undermines the artifact’s claim to “open source” under the OSI definition.

A Three-Layer Governance Framework

To address these failures jointly, the paper proposes a three-layer regime (labeled TO-BE in the opening figure).

Informational Layer — Standardized Safety Card. The model card is restructured from prose README into a machine-readable structured document organized around three principles: (1) heritage transparency — full disclosure of training data, base model, and alignment lineage; (2) alignment provenance — what safety data, at which stage, with what intensity; and (3) operational safety evaluation — quantitative reporting of evaluation datasets, thresholds, and failure modes. The full Safety Card template is provided in Appendix IV.
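The three principles translate naturally into a typed, serializable record. The sketch below is an illustrative schema only: the class and field names are assumptions, and the paper's actual Safety Card template in Appendix IV may organize things differently. It shows how a structured card supports the automated checks that prose READMEs defeat, such as listing missing sections and emitting JSON.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class Heritage:  # (1) heritage transparency
    base_model: str
    training_data: List[str]
    alignment_lineage: List[str]


@dataclass
class AlignmentStage:  # (2) alignment provenance
    stage: str        # e.g. "SFT" or "preference tuning"
    safety_data: str  # which safety data was used at this stage
    intensity: str    # free text in this sketch; a template could fix units


@dataclass
class SafetyEvaluation:  # (3) operational safety evaluation
    dataset: str
    metric: str
    threshold: float
    result: float
    failure_modes: List[str] = field(default_factory=list)


@dataclass
class SafetyCard:
    model_name: str
    heritage: Heritage
    alignment: List[AlignmentStage]
    evaluations: List[SafetyEvaluation]

    def missing_sections(self) -> List[str]:
        """Name each principle the card leaves empty."""
        missing = []
        if not self.heritage.base_model or not self.heritage.training_data:
            missing.append("heritage")
        if not self.alignment:
            missing.append("alignment")
        if not self.evaluations:
            missing.append("evaluations")
        return missing

    def to_json(self) -> str:
        """Serialize the whole card, nested records included, as machine-readable JSON."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)
```

Because every field has a fixed name, a hub could aggregate cards across models or map them to policy requirements without parsing free text.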

Normative Layer — Standardized AUP. Rather than letting each vendor draft an AUP from scratch, a standardized template is adopted, supporting both integrated forms (Llama 3 AUP, Gemma TOU, OpenRAIL-M) and standalone forms (GPT-oss, Qwen Usage Policy), with a shared vocabulary of prohibited categories.
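A shared vocabulary is what lets two AUPs be compared mechanically. In the sketch below, the enum's categories are the Llama 3 AUP examples listed in the Preliminary section, while the synonym table mapping vendor wording to those categories is purely hypothetical. Unmapped wording raises an error instead of being silently dropped, so gaps in the vocabulary surface during review.

```python
from enum import Enum
from typing import Dict, Iterable, Set


class Prohibited(Enum):
    """Shared vocabulary; categories taken from the Llama 3 AUP examples in the text."""
    MILITARY = "military applications"
    WEAPONS = "weapons development"
    CHILD_EXPLOITATION = "exploitation of minors"
    DISCRIMINATION = "discriminatory targeting"
    IMPERSONATION = "impersonation"


# Hypothetical mapping from vendor-specific wording to the shared vocabulary.
SYNONYMS: Dict[str, Prohibited] = {
    "military use": Prohibited.MILITARY,
    "warfare": Prohibited.MILITARY,
    "weapons": Prohibited.WEAPONS,
    "child sexual abuse material": Prohibited.CHILD_EXPLOITATION,
    "discrimination": Prohibited.DISCRIMINATION,
    "impersonating others": Prohibited.IMPERSONATION,
}


def normalize(terms: Iterable[str]) -> Set[Prohibited]:
    """Map free-text AUP clauses onto shared categories; raise on unmapped wording."""
    result: Set[Prohibited] = set()
    for term in terms:
        key = term.strip().lower()
        if key not in SYNONYMS:
            raise KeyError(f"no shared category for {term!r}")
        result.add(SYNONYMS[key])
    return result


def compare(aup_a: Iterable[str], aup_b: Iterable[str]) -> Dict[str, Set[Prohibited]]:
    """Report which prohibited categories two AUPs share and where they differ."""
    a, b = normalize(aup_a), normalize(aup_b)
    return {"shared": a & b, "only_a": a - b, "only_b": b - a}
```

Two vendors that write "warfare" and "Military use" end up in the same category, so a downstream user can see at a glance which restrictions actually differ.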

Legal Layer — OWFM-tailored License. The paper proposes an Apache 2.0 revision designed specifically for OWFMs. Key changes are (a) replacing Source/Object/Work with Model/Output, (b) moving copyright notices into the model card, (c) expanding the grant to cover training, distillation, and outputs, (d) incorporating the AUP as an exhibit to the license, and (e) adding a dedicated training-data section.

Counter-arguments

The paper engages three principal objections to its proposal.

Chilling Effect and Cost (§4.1). Critics worry that a more rigorous regime will price out small developers. The paper argues that hub-level shared templates and automation tools can drive marginal cost to near zero.

Self-Reporting Bias and Safetywashing (§4.2). Self-authored cards are vulnerable to manipulation. The paper proposes community monitoring and platform-level verification as complementary checks.

Enforcement Challenges (§4.3). Direct enforcement against downstream actors is hard. The paper advocates a multi-pronged response combining ex-ante friction, reputational mechanisms, and platform-level sanctions such as takedown and throttling.

Conclusion

Open-weight foundation models have driven both the openness and the rapid progress of modern AI, but they have also created a new governance problem: how to track responsibility once weights are public. Model cards laid the foundation for transparency, but they are not the destination of governance. Through an audit of 500 Hugging Face models, this paper documents four structural deficiencies of the current regime and proposes a Three-Layer Governance Framework, together with an OWFM-tailored license, as a coherent remedy.

The contribution is not merely “better model cards” but the integrated argument that information, norms, and law must operate as a single regime for downstream governance to become enforceable in practice. As policy, law, and machine learning continue to converge, we hope this work supports the responsible evolution of the open-weight ecosystem.