What is Trustworthy AI?

“Trustworthy AI” refers to AI systems that can safely benefit users and society. Such systems must be designed so that users can understand the AI’s decisions while the system maintains an adequate level of performance. At the same time, they must be technically safe, operating reliably across a wide range of situations. As AI is applied in ever more fields, trustworthiness has become an important issue that concerns not only AI performance but also human ethical values.

Key Elements for AI Trustworthiness

Various documents[1][2][3][4] describe the elements of trustworthiness that AI systems should possess, and the lists differ slightly from one document to another. However, three elements appear consistently across them: (1) Safety, (2) Accountability, and (3) Transparency. These attributes are essential for AI to be trustworthy and are the fundamental concepts for building trustworthy AI.

- Safety: Safety means that AI systems are designed to operate reliably as intended. Stable operation in real-world environments is a critical part of trustworthiness, especially in domains where important decisions are made. Systems should therefore be designed to withstand errors and external attacks and to keep operating stably even in unexpected situations.

- Accountability: Accountability concerns the mechanisms for appropriately assigning responsibility for the impacts of AI systems and for providing adequate remedies. It includes the verifiability and reproducibility of AI outputs and means ensuring that AI systems can satisfy evaluation and audit requirements. With such mechanisms in place, remedies and legal accountability can minimize the harm caused by the automated decisions AI will increasingly make in the near future. Accountability is thus a means of earning public trust in AI and alleviating public fears.

- Transparency: Transparency helps users understand how AI reaches its decisions. It requires providing information about why the AI made a particular decision, which is essential for building trust with users. If explainability techniques can reveal the model’s decision process visually, and users can see how each variable influences the outcome, users can place even greater trust in the AI.

Key Technologies for Trustworthy AI

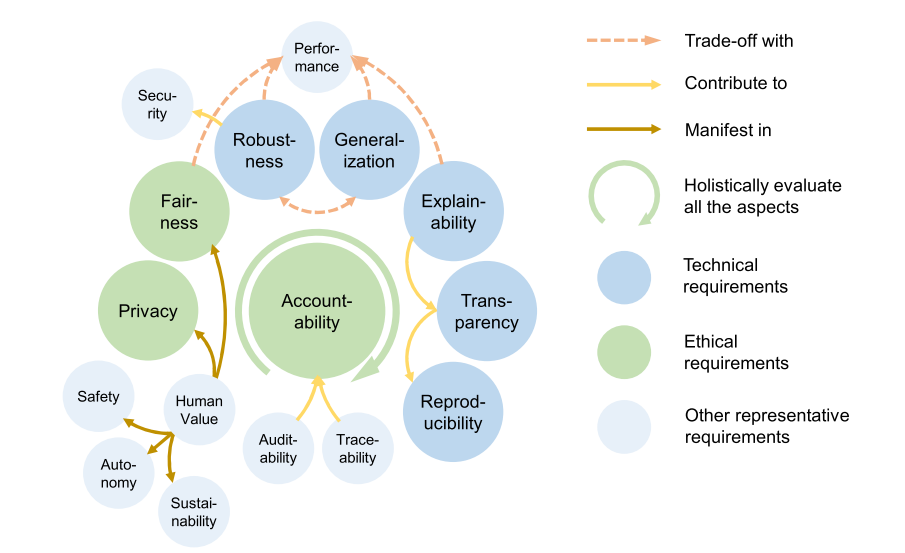

Researchers in the field have classified the technologies for achieving AI trustworthiness as shown in the figure above.

Among these, our lab conducts research on Robustness, Generalization, and Explainability, the technical requirements for achieving the key elements of AI trustworthiness (Safety, Accountability, and Transparency) that lie at the core of domestic and international AI regulation and legislation.

Robustness

Robustness is the property that allows an AI system to operate stably across diverse environments. A robust model produces stable results, without significant performance degradation, even when the input data vary slightly. Typical approaches include training on diversified data and scenario-based testing, which help AI deliver trustworthy results across various situations.
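One simple form of scenario-based testing is to re-evaluate a fixed model on perturbed copies of its inputs and watch how accuracy changes as the perturbation grows. The sketch below is a minimal illustration, not our lab's evaluation protocol: the classifier `predict`, the four data points, and the use of Gaussian noise as the "scenario" are all hypothetical.

```python
import random

def predict(x):
    """Hypothetical fixed classifier: class 1 if the feature sum is positive."""
    return int(sum(x) > 0)

def accuracy_under_noise(data, sigma, trials=200, seed=0):
    """Accuracy when every feature of every input is perturbed by Gaussian
    noise with standard deviation sigma, repeated `trials` times."""
    rng = random.Random(seed)  # fixed seed keeps the stress test reproducible
    correct = 0
    for _ in range(trials):
        for x, y in data:
            noisy = [xi + rng.gauss(0.0, sigma) for xi in x]
            correct += predict(noisy) == y
    return correct / (trials * len(data))

# Hypothetical labeled inputs; two of them lie close to the decision boundary.
data = [([1.0, 1.2], 1), ([0.2, 0.1], 1), ([-1.0, -0.8], 0), ([-0.1, -0.3], 0)]

for sigma in (0.0, 0.1, 0.5, 1.0):
    print(f"sigma={sigma}: accuracy={accuracy_under_noise(data, sigma):.3f}")
```

Points near the decision boundary are the first to flip as `sigma` grows, which is how a model can look perfect on clean data yet degrade under small variations.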

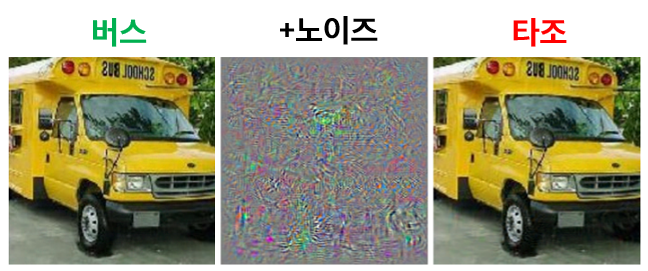

In practice, AI models typically lack robustness, and deploying them in this state is known to leave them vulnerable to attacks. Such attacks can be so subtle that the changes are indistinguishable to the human eye. In the figure above, adding the attack noise (center) to the normal image (left) produces the image on the right. To humans, both the left and right images are clearly a bus, yet the AI correctly identifies the left image as a bus while misclassifying the right one as an ostrich.
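The text does not say how the attack noise in the figure was produced. As a hedged illustration, the sketch below applies the Fast Gradient Sign Method (FGSM), a standard single-step gradient attack, to a hypothetical three-feature logistic-regression model standing in for the image classifier; the weights, the input, and the budget `eps` are made up. Every feature moves by at most `eps`, yet the predicted class flips.

```python
import math

# Hypothetical toy model: logistic regression with hand-picked weights.
# An image classifier has far more inputs, but the attack logic is the same.
WEIGHTS = [2.0, -1.5, 0.5]
BIAS = 0.1

def predict_proba(x):
    """Model's probability that x belongs to class 1 (say, "bus")."""
    z = sum(w * xi for w, xi in zip(WEIGHTS, x)) + BIAS
    return 1.0 / (1.0 + math.exp(-z))

def sign(v):
    return (v > 0) - (v < 0)

def fgsm(x, y, eps):
    """Fast Gradient Sign Method: shift every feature by eps in the direction
    that increases the cross-entropy loss. For logistic regression the input
    gradient of that loss is (p - y) * w."""
    p = predict_proba(x)
    return [xi + eps * sign((p - y) * w) for xi, w in zip(x, WEIGHTS)]

x = [0.4, 0.1, 0.2]              # hypothetical clean input with true label 1
x_adv = fgsm(x, y=1, eps=0.3)    # each feature changes by at most 0.3
# The clean score is above 0.5 (class 1); the adversarial score falls below 0.5.
print(f"clean: {predict_proba(x):.3f}  adversarial: {predict_proba(x_adv):.3f}")
```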

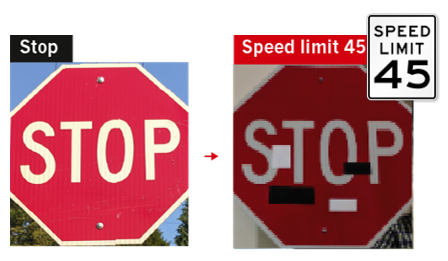

Such attacks can also be exploited in real-world applications such as autonomous vehicles. As shown in the image above, attaching stickers to a road sign can trick the system into recognizing a stop sign as a minimum speed sign.

In other words, robustness addresses the question “Does the AI model operate stably even under malicious attacks?” It bears directly on the safety of AI models against possible internal and external attacks. Related techniques include adversarial attacks and adversarial training (adversarial defense).
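Adversarial training is commonly framed as a min-max problem: craft a worst-case perturbation for each example (inner maximization), then update the model on that perturbed example (outer minimization). The minimal sketch below uses a single FGSM step for the inner problem, i.e. single-step adversarial training, on a logistic-regression model; the toy dataset, learning rate, epoch count, and `eps` are hypothetical choices for illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def dot(w, x):
    return sum(wi * xi for wi, xi in zip(w, x))

def sign(v):
    return (v > 0) - (v < 0)

def fgsm(w, b, x, y, eps):
    """Inner maximization (one step): shift x by eps along the sign of the
    input gradient of the logistic loss, which is (p - y) * w."""
    p = sigmoid(dot(w, x) + b)
    return [xi + eps * sign((p - y) * wi) for xi, wi in zip(x, w)]

def adversarial_train(data, eps, lr=0.1, epochs=100):
    """Outer minimization: SGD on the logistic loss at the perturbed inputs."""
    w, b = [0.0] * len(data[0][0]), 0.0
    for _ in range(epochs):
        for x, y in data:
            x_adv = fgsm(w, b, x, y, eps)  # attack the current model
            p = sigmoid(dot(w, x_adv) + b)
            w = [wi - lr * (p - y) * xi for wi, xi in zip(w, x_adv)]
            b -= lr * (p - y)
    return w, b

def accuracy(w, b, data, eps=0.0):
    """Accuracy on clean inputs (eps=0) or on FGSM-perturbed inputs (eps>0)."""
    hits = 0
    for x, y in data:
        x_eval = fgsm(w, b, x, y, eps) if eps > 0 else x
        hits += int(sigmoid(dot(w, x_eval) + b) > 0.5) == y
    return hits / len(data)

# Hypothetical toy dataset: class-0 points mirror the class-1 points.
pos = [[1.0, 1.2], [1.5, 0.8], [0.9, 1.0]]
data = [(x, 1) for x in pos] + [([-v for v in x], 0) for x in pos]

w, b = adversarial_train(data, eps=0.3)
print("clean:", accuracy(w, b, data), "under FGSM eps=0.3:", accuracy(w, b, data, eps=0.3))
```

Real models replace the closed-form input gradient with automatic differentiation, and multi-step attacks such as PGD are often used for the inner step.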

Representative papers from our lab:

- Fantastic Robustness Measures: The Secrets of Robust Generalization [NeurIPS 2023] | [Paper] | [Repo] | [Article]

- Understanding catastrophic overfitting in single-step adversarial training [AAAI 2021] | [Paper] | [Code]

- GradDiv: Adversarial robustness of randomized neural networks via gradient diversity regularization [IEEE Transactions on PAMI] | [Paper] | [Code]

- Generating transferable adversarial examples for speech classification [Pattern Recognition] | [Paper]

Generalization

Generalization is the ability of AI to make trustworthy predictions on new data. It covers a range of learning methods that let AI models maintain stable performance in environments not seen during training, rather than performing well only on the training data.

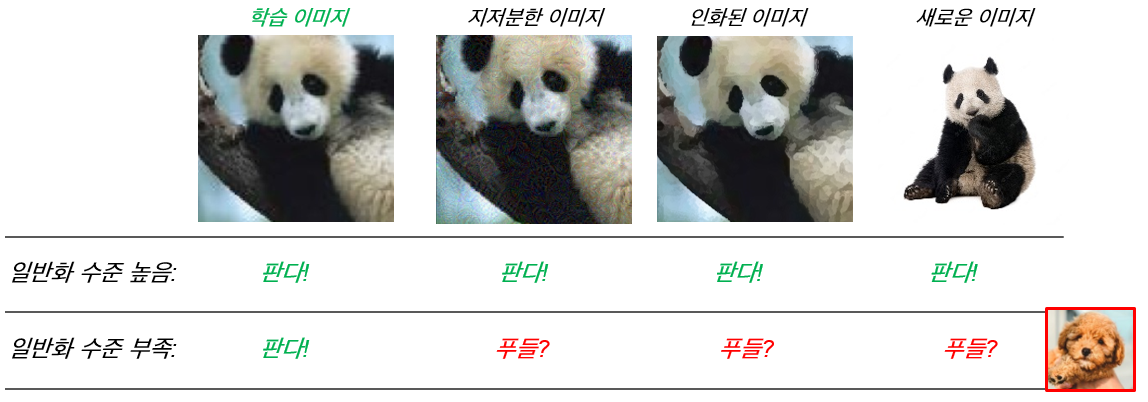

Humans correctly classify all of the images above as pandas, and an AI with strong generalization can make similar judgments. When generalization is insufficient, however, the model correctly classifies only its training images and fails to recognize other data properly (out-of-distribution data).

In practice, this lack of generalization frequently occurs when the training data is biased. For instance, if most of the videos and photos used to train an autonomous driving AI are taken on bright days, the system may fail to work properly at night or in rainy conditions.
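The day-versus-night failure can be reproduced in miniature. In the sketch below, a hypothetical one-feature classifier (mean brightness of an image region, label 1 = obstacle present) is fit on daytime samples and then evaluated on the same scenes with every brightness value scaled by 0.3 to mimic nighttime; the feature, data, and scaling factor are all made up for illustration.

```python
def fit_threshold(samples):
    """Hypothetical 1-D classifier: threshold halfway between the class means."""
    pos = [x for x, y in samples if y == 1]
    neg = [x for x, y in samples if y == 0]
    return (sum(pos) / len(pos) + sum(neg) / len(neg)) / 2

def accuracy(threshold, samples):
    """Fraction of samples where (x > threshold) matches the label."""
    return sum((x > threshold) == (y == 1) for x, y in samples) / len(samples)

# Training distribution: bright daytime scenes (feature = region brightness).
day = [(0.90, 1), (0.85, 1), (0.80, 1), (0.50, 0), (0.45, 0), (0.55, 0)]
# Shifted distribution: the same scenes at night, uniformly darker.
night = [(x * 0.3, y) for x, y in day]

t = fit_threshold(day)
print(accuracy(t, day), accuracy(t, night))  # 1.0 0.5
```

The threshold is perfectly accurate in-distribution yet misses every obstacle at night, because it learned an absolute brightness level that only holds under the training conditions.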

In other words, generalization addresses the question “Can the AI model answer correctly for examples it has never encountered during training?” It is a core performance requirement for AI technologies, including deep learning, to operate reliably. Related techniques include transfer learning, domain adaptation, and Sharpness-Aware Minimization (SAM).
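SAM, as introduced by Foret et al. (2021), seeks weights whose whole neighborhood has low loss. Each step first moves to an approximate worst-case point w + ρ·g/‖g‖ (g is the gradient at w), then updates the original w with the gradient taken at that point. The sketch below is a minimal pure-Python version of that two-step update on a hypothetical quadratic loss; the loss, `lr`, and `rho` values are illustrative assumptions, not settings from our papers.

```python
import math

def sam_step(w, grad_fn, lr=0.05, rho=0.05):
    """One Sharpness-Aware Minimization step.

    1. Ascent: move to w + rho * g / ||g||, an approximate worst-case point
       within an L2 ball of radius rho around w.
    2. Descent: update the ORIGINAL weights w with the gradient taken at
       that perturbed point."""
    g = grad_fn(w)
    norm = math.sqrt(sum(gi * gi for gi in g)) + 1e-12  # guard against g == 0
    w_adv = [wi + rho * gi / norm for wi, gi in zip(w, g)]
    g_adv = grad_fn(w_adv)
    return [wi - lr * gi for wi, gi in zip(w, g_adv)]

# Hypothetical loss L(w) = 0.5 * (w0^2 + 10 * w1^2): much sharper along w1.
def loss(w):
    return 0.5 * (w[0] ** 2 + 10 * w[1] ** 2)

def grad(w):
    return [w[0], 10 * w[1]]

w = [1.0, 1.0]
for _ in range(100):
    w = sam_step(w, grad)
print(w, loss(w))
```

With `rho=0` the step reduces to plain gradient descent. With a fixed `rho > 0` the ascent step always has length `rho` while the gradient is nonzero, so the iterates can hover near the minimum instead of settling exactly on it.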

Representative papers from our lab:

- Stability Analysis of Sharpness-Aware Minimization [Under Review] | [Paper] | [Article]

- Differentially Private Sharpness-Aware Training [ICML 2023] | [Paper] | [Code]

- Fast sharpness-aware training for periodic time series classification and forecasting [Applied Soft Computing] | [Paper]

- Compact class-conditional domain invariant learning for multi-class domain adaptation [Pattern Recognition] | [Paper]

Explainability

Explainability is the ability to explain AI’s decision-making process and results to users in an understandable way. Explainability techniques let users understand AI decisions transparently by showing how those decisions were derived.

Many current AI models produce good results but cannot clearly explain why they produced them. Explainability aims to overcome this shortcoming and addresses the question “Can the AI sufficiently explain its results to humans?” It is an essential technology for the transparency required by major domestic and international AI legislation, and a core element for using AI in high-risk domains such as finance and healthcare. Related techniques include LIME (Local Interpretable Model-agnostic Explanations), SHAP (SHapley Additive exPlanations), and attention mechanisms.
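SHAP builds on Shapley values from cooperative game theory: each feature's attribution is its average marginal contribution over all subsets of the other features. The sketch below computes exact Shapley values by brute-force enumeration for a hypothetical three-feature model, treating a feature as "absent" by replacing it with a baseline value (one common convention). It is not the `shap` library's API, which relies on model-specific algorithms and approximations such as Kernel SHAP to scale beyond a handful of features.

```python
import math
from itertools import combinations

def shapley_values(model, x, baseline):
    """Exact Shapley values of model(x) relative to a baseline input.

    phi_i = sum over subsets S of the other features of
            |S|! (n - |S| - 1)! / n! * (v(S + {i}) - v(S)),
    where v(S) evaluates the model with features outside S set to baseline.
    Enumeration is exponential in n, so this only suits a few features."""
    n = len(x)

    def value(coalition):
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = math.factorial(size) * math.factorial(n - size - 1) / math.factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi

# Hypothetical model: two linear terms plus an interaction between features 0 and 2.
def model(z):
    return 3 * z[0] + 2 * z[1] + z[0] * z[2]

x = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(model, x, baseline)
print(phi)
```

Here the linear terms are attributed directly (3 to feature 0, 4 to feature 1) and the interaction term x0·x2 = 3 is split equally between features 0 and 2, giving about [4.5, 4.0, 1.5]; the attributions sum to f(x) − f(baseline) = 10, the "efficiency" property SHAP relies on.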

Representative papers from our lab:

- Are Self-Attentions Effective for Time Series Forecasting? [NeurIPS 2024] | [Paper] | [Repo]

- Outside the (Black) Box: Explaining Risk Premium via Interpretable Machine Learning [Under Review] | [Paper]

- Fair sampling in diffusion models through switching mechanism [AAAI 2024] | [Paper] | [Code]

Conclusion

Trustworthy AI must be designed around transparency, safety, and accountability so that users can trust its decisions. These elements enable AI to play a positive role in society while reducing the risks it inevitably poses. Building trustworthy AI through technical approaches such as robustness, generalization, and explainability allows AI to develop in harmony with humans.

Trustworthy AI is no longer optional but essential. For AI to become deeply embedded in human life, trustworthiness and safety must be the top priorities, and the technical approaches to achieve this must continue to advance.